AI scribes may save time, but in medicine, speed means very little without trust.

For many doctors, the promise is obvious. Less time staring at the screen. Less after-hours charting. More eye contact with patients. More energy left at the end of the day. Ambient documentation has become one of the clearest real-world AI use cases in medicine, and McKinsey estimated in early 2026 that 10% or more of U.S. physicians have adopted ambient scribing solutions.

But adoption is not the same as trust.

Physicians are right to ask harder questions. What exactly is an AI scribe listening to? How accurate is the note? What happens when the output is wrong? Do patients know it is being used? Does it truly save time, or does it just shift the burden into a new kind of review work?

Those are the right questions, because AI scribes are not magic. They are tools. And like most tools in medicine, their value depends on where they help, where they fail, and how carefully they are used.

So, should physicians trust AI scribes? The answer might unsettle you…

Disclaimer: While these are general suggestions, it’s important to conduct thorough research and due diligence when selecting AI tools. We do not endorse or promote any specific AI tools mentioned here. This article is for educational and informational purposes only. It is not intended to provide legal, financial, or clinical advice. Always comply with HIPAA and institutional policies. For any decisions that impact patient care or finances, consult a qualified professional.

1. AI scribes solve a real problem, and that is why they are gaining traction

The appeal of AI scribes is easy to understand because the problem they address is painfully familiar.

Documentation is one of the most persistent sources of physician frustration. It slows visits, pulls attention away from patients, and extends the workday long after clinic is over. If an AI tool can meaningfully reduce that burden, it becomes instantly relevant.

That is exactly why ambient documentation has moved so quickly from curiosity to implementation. McKinsey described ambient scribing as a compelling solution with “tangible and measurable value” for health systems and clinicians, especially in reducing documentation burden and streamlining related administrative work.

The American Medical Association has also highlighted large-scale use. In one 2025 report, The Permanente Medical Group said its ambient AI scribes were used 2.5 million times in one year, helping save about 15,000 hours while easing documentation burden and improving communication.

That does not mean every physician experience will look like that. Large systems have different resources, training structures, workflows, and integration capabilities than smaller practices. But it does show that AI scribes are not just theoretical. They are already being used at scale, and enough organizations are seeing value to keep pushing forward.

The trust question starts here: physicians are not being asked to believe in a futuristic concept. They are being asked to evaluate a tool that is already proving useful in at least some real-world settings.

And that matters because it separates AI scribes from a lot of healthcare AI hype.

The case for AI scribes is not that they replace physicians. It is that they may reduce friction around one of the most draining parts of practice. When these tools work well, they can give physicians more room to focus on listening, thinking, connecting, and making clinical decisions instead of trying to capture every detail manually. AMA reporting has emphasized that ambient listening can enhance clinician-patient interaction by reducing administrative burden and helping physicians stay more present.

That is the strongest argument in their favor. Not novelty. Not trendiness. But relief.

2. Trust should not be blind, because the risks are real

The strongest case against uncritical trust is also straightforward: documentation in medicine is not a casual task.

Clinical notes carry legal, operational, billing, and patient-care consequences. If an AI scribe makes an error, inserts something that was never said, misses an important nuance, or creates a misleading summary, the physician is still the one responsible for the record.

That is why the central rule around AI scribes should be simple: never confuse assistance with accountability.

The AMA has been explicit that physicians should review AI-generated documentation carefully, explain the use of AI to patients, obtain consent when appropriate, and reassure patients about privacy and compliance. The AMA Journal of Ethics has also emphasized informed consent, the patient-clinician relationship, and the ways ambient listening tools can influence documentation practices and conversations in the exam room.

Those issues are not minor. There are at least four practical concerns physicians should keep in mind.

- First is accuracy. AI-generated notes can sound polished even when they are incomplete or wrong. A clean sentence is not the same as a correct record. If a tool overstates certainty, misses context, or rephrases something in a clinically misleading way, that can create real downstream problems. That is exactly why AI tool evaluation matters more than polished demos.

- Second is privacy and consent. These tools often rely on passive audio capture in encounters that may include sensitive information. Patients deserve to know when AI is part of the documentation process. They also deserve clarity on what is being recorded, how it is used, and what protections are in place. That concern overlaps with broader questions around ChatGPT privacy and medical AI safety.

- Third is legal and compliance risk. Reuters reported in January 2026 that ambient scribing is creating new legal questions around consent, data sharing with third-party vendors, and review of AI-generated records, with lawsuits and state-level requirements already shaping the landscape. For physicians, this is where AI legal safety stops being theoretical.

- Fourth is false efficiency. A tool may look impressive in a demo and still fail the real test if it creates too much supervision burden. If a physician has to heavily edit every note, double-check every detail, and worry about what was omitted, the promised time savings may shrink quickly.

This is where trust has to be earned. Not by vendor claims. Not by sleek interfaces. By consistent, reviewable performance in real workflows.

A physician should not ask, “Do I trust AI?”

A physician should ask, “Do I trust this tool, in this setting, for this task, with these safeguards?”

That is a much better question.

3. The smartest stance is selective trust with strong guardrails

So if blind trust is a mistake, does that mean physicians should avoid AI scribes altogether?

No.

It means they should use them like professionals evaluating any other clinical tool: with judgment, boundaries, and clear standards.

Selective trust is probably the right model.

AI scribes seem most promising when they are used for what they are best at: helping reduce repetitive documentation burden while leaving final review, approval, and responsibility with the clinician. That is very different from treating them as autonomous note writers that can be accepted without scrutiny.

The healthiest way to think about an AI scribe is as a documentation assistant, not a substitute for clinical authorship. That mindset changes how physicians evaluate success.

A good AI scribe should:

- reduce keystrokes and clicks

- preserve or improve note quality

- support better patient attention during visits

- lower after-hours documentation burden

- fit naturally into workflow without requiring constant rescue

A bad AI scribe may still look impressive at first, but it will usually reveal itself through one of three things:

- too many factual or contextual errors

- too much editing required

- too little transparency about privacy, consent, or data handling

The good news is that health systems and physician groups appear to be learning this in real time. AMA reporting on health systems moving into AI has stressed coordinated deployment, caution, and the importance of practical use cases over broad claims. Even the optimistic coverage is usually paired with reminders about physician oversight and implementation discipline.

That is the right tone. Physicians do not need to be anti-AI. But they should be anti-sloppiness.

For many, the real question is not whether to trust AI scribes in the abstract. It is whether the tool improves the work enough to justify adoption without compromising trust, privacy, or documentation integrity. That broader conversation fits into what many physicians are still learning about healthcare AI and the future of medical technology.

That evaluation should be practical.

- Does it make the day better?

- Does it reduce burnout pressure?

- Does it help the physician stay more present?

- Does it preserve patient confidence?

- Does it hold up under review?

If yes, then trust may be warranted in a bounded, responsible way.

If not, then the technology may still be interesting, but it is not ready for prime time in that setting.

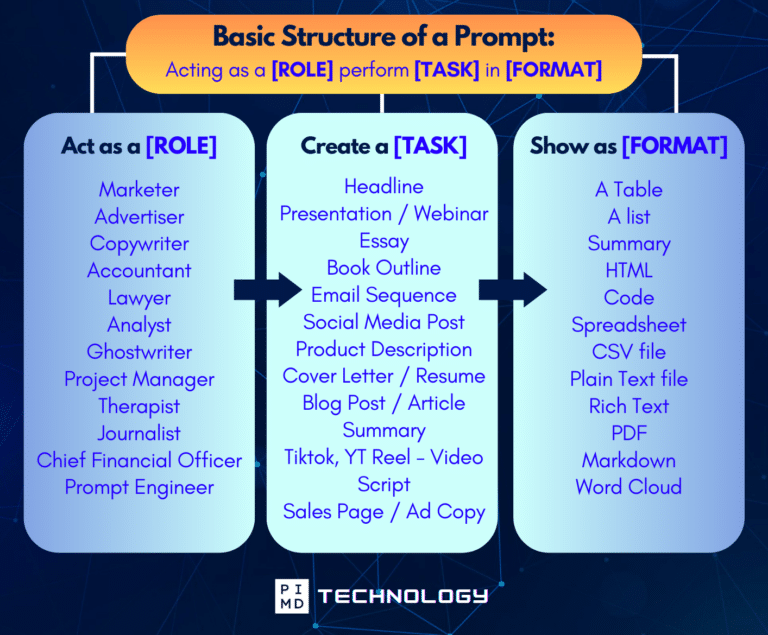

Unlock the Full Power of ChatGPT With This Copy-and-Paste Prompt Formula!

Download the Complete ChatGPT Cheat Sheet! Your go-to guide to writing better, faster prompts in seconds. Whether you’re crafting emails, social posts, or presentations, just follow the formula to get results instantly.

Save time. Get clarity. Create smarter.

4. Physicians should care, but they should care carefully

The same reasoning extends beyond scribes to the broader category of AI agents. The case for paying attention to them is strong. The case for trusting them blindly? Not so much.

Do not ask, “Is AI good or bad?” Ask, “Where is this safe, useful, and worth the tradeoff?”

Healthcare is a high-stakes environment. Even when AI sounds polished, physicians are still responsible for what they sign, what they approve, and what touches patient care. That is exactly why physicians also need to understand the compliance side of using AI without getting sued.

So how should physicians use these tools? Your mindset around AI agents should be practical, not ideological.

Some tasks are obviously better fits than others. Low-risk, repetitive, rules-based, reviewable work is the natural starting point. Think scheduling logic, information gathering, message drafting, documentation support, chart summaries, and process coordination. These are the kinds of tasks where speed and consistency matter, and where a human can stay in the loop without having to rebuild the whole thing from scratch.

Higher-risk clinical judgment is different. Nuanced decisions, patient-specific reasoning, and anything with meaningful downstream consequences should clear a much higher bar. In those settings, AI may be useful as support… but not as a substitute for judgment.

This is also where governance matters.

The real question is not just what an agent can do. It’s what it should be allowed to do, what data it can access, how its output gets reviewed, and what happens when it fails. That’s not boring compliance talk. That’s the whole ballgame in healthcare.

So what should physicians watch for?

- First, be skeptical of vague claims. If a vendor says a tool is “agentic” but can’t clearly explain the workflow, oversight, failure points, or review process, that’s a red flag.

- Second, look for narrow wins before big promises. A tool that reliably fixes one annoying workflow is usually more valuable than one that claims it will reinvent the entire practice. (We’ve all seen that movie before.)

- Third, measure the supervision burden. If an agent saves five minutes but creates ten minutes of verification work, it’s not helping.

- Fourth, don’t confuse a polished interface with real capability. A slick demo is nice. Operational reliability is nicer.

- And fifth, remember that strategy matters more than novelty. The physicians who benefit most from these tools will probably be the ones who understand their workflow problems clearly before they go shopping for solutions.

Long term, that is part of becoming one of the AI-literate doctors who will win. That may be the most important mindset shift of all.

AI agents are not a reason to abandon professional judgment. They’re a reason to become more intentional about where your judgment is most valuable.

Final Thoughts

Should physicians trust AI scribes?

For now, yes, if it is the right tool. But trust them the way physicians should trust any meaningful clinical technology: cautiously, selectively, and with oversight.

AI scribes are gaining traction because they address a real pain point. The documentation burden is real, and early large-scale examples suggest these tools can reduce friction and save time.

But the risks are also real. Accuracy matters. Consent matters. Privacy matters. Review matters. Trust should never mean handing over responsibility.

For physicians, the smartest position is neither enthusiastic surrender nor blanket rejection.

It is discernment.

- Use the tools that clearly reduce burden.

- Question the ones that create new risks.

- Keep humans accountable.

- And remember that the goal is not just faster notes.

It is better medicine, better attention, and a better experience for both doctor and patient. But what do you think? Let us hear your thoughts down in the comments!

Download The Physician’s Starter Guide to AI – a free, easy-to-digest resource that walks you through smart ways to integrate tools like ChatGPT into your professional and personal life. Whether you’re AI-curious or already experimenting, this guide will save you time, stress, and maybe even a little sanity.

Want more tips to sharpen your AI skills? Subscribe to our newsletter for exclusive insights and practical advice. You’ll also get access to our free AI resource page, packed with AI tools and tutorials to help you have more in life outside of medicine. Let’s make life easier, one prompt at a time. Make it happen!

Disclaimer: The information provided here is based on available public data and may not be entirely accurate or up-to-date. It’s recommended to contact the respective companies/individuals for detailed information on features, pricing, and availability. All screenshots are used under the principles of fair use for editorial, educational, or commentary purposes. All trademarks and copyrights belong to their respective owners.


Further Reading