Chatbots can talk. Agents can work. That difference is about to matter a TON to physicians.

Most doctors no longer need convincing that AI is real. They’ve seen it write emails, summarize notes, and generate decent text. The better question now is this: what happens when AI stops acting like a chatbot and starts acting more like a worker?

That’s the shift behind AI agents.

An AI agent isn’t just a tool waiting for a prompt. It’s built to pursue a goal. It can gather information, make decisions within clear boundaries, use software and tools, and keep a task moving with less hand-holding from a human. OpenAI describes agents as systems that can accomplish tasks on your behalf using models, tools, and guardrails. For physicians, that distinction matters.

A chatbot helps you think. An agent helps you do.

That doesn’t mean AI agents are ready to run a medical practice or replace doctors. Not even close. But they are becoming more relevant to the work around medicine… documentation, inbox management, chart prep, scheduling, follow-up workflows, coding support, prior auths, and the endless admin coordination that eats up time and energy.

That broader shift also fits with what many doctors are already seeing in AI in medicine today.

Why should physicians care?

Because the next real productivity gains in medicine may not come from AI that simply gives better answers. They may come from AI that reduces friction across the broken workflows we deal with every day.

Disclaimer: While these are general suggestions, it’s important to conduct thorough research and due diligence when selecting AI tools. We do not endorse or promote any specific AI tools mentioned here. This article is for educational and informational purposes only. It is not intended to provide legal, financial, or clinical advice. Always comply with HIPAA and institutional policies. For any decisions that impact patient care or finances, consult a qualified professional.

1. AI agents are different from chatbots, and that difference matters

Most physicians have already met AI in its chatbot phase. Ask a question. Get an answer. Copy, paste, tweak, and then figure out what to do with it. Useful? Absolutely. But let’s be honest… the human is still doing most of the heavy lifting.

Agents are different.

They’re built around action, not just output. Instead of simply generating text, an agent can pull the right information, apply logic, use connected tools, and move part of a workflow forward. That’s one of the clearest shifts happening in AI right now.

And OpenAI isn’t being subtle about it. Its current language centers on task completion, tool use, and bounded autonomy. The bigger idea? AI coworkers. Systems that can work within permissions, shared context, and organizational guardrails. That matters.

Because the real opportunity for physicians may not be better answers on a screen. It may be AI that quietly takes friction out of the work around medicine.

If you want a physician-focused example of where this is heading, look at how doctors are already experimenting with ChatGPT agent mode.

Here’s a side-by-side comparison to make it concrete:

- A chatbot helps draft a follow-up message after a patient visit.

- An agent could potentially pull the visit summary, generate a patient-friendly instruction set, flag missing labs, prepare a message draft inside the workflow, and route it to the physician or staff member for review.

- A chatbot can explain prior authorization requirements.

- An agent could eventually check plan rules, gather required chart elements, draft the justification, and prepare a submission packet for human approval.

- A chatbot can summarize a long thread in the inbox.

- An agent could sort messages by urgency, draft responses, identify which items need physician review, and suggest what can be delegated.
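To make the inbox example concrete, here is a minimal Python sketch of the sorting-and-routing step. Everything in it is a hypothetical assumption for illustration: the `Message` type, the keyword lists, and the routing labels are not taken from any real product, and the keywords are not clinical triage criteria. The only point is that an agent-style workflow labels and orders work for human review instead of just summarizing it.

```python
from dataclasses import dataclass

# Hypothetical keyword lists for illustration only; not clinical triage criteria.
URGENT_TERMS = ("chest pain", "shortness of breath", "bleeding")
ADMIN_TERMS = ("refill", "appointment", "form", "billing")

# Lower number = shown earlier in the review queue.
PRIORITY = {
    "physician_review_urgent": 0,
    "physician_review_routine": 1,
    "delegate_to_staff": 2,
}


@dataclass
class Message:
    sender: str
    text: str


def route(message: Message) -> str:
    """Return a routing label; a human still reviews every item."""
    body = message.text.lower()
    if any(term in body for term in URGENT_TERMS):
        return "physician_review_urgent"
    if any(term in body for term in ADMIN_TERMS):
        return "delegate_to_staff"
    return "physician_review_routine"


def triage(inbox: list[Message]) -> list[tuple[str, Message]]:
    """Label each message and list urgent items first."""
    labeled = [(route(m), m) for m in inbox]
    return sorted(labeled, key=lambda pair: PRIORITY[pair[0]])
```

Note the design choice: anything the rules don’t recognize falls through to routine physician review rather than being delegated, so the default errs toward human attention.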

This is why the distinction matters.

Physicians are not drowning in a lack of information. They’re drowning in fragmentation… clicks, context-switching, and low-value work that keeps piling up. The appeal of AI agents is not that they’re magically smarter. It’s that they may reduce the number of manual steps between intention and execution.

But physicians should be careful here.

Not every tool labeled an “agent” actually deserves the name. In plenty of cases, it’s still just a chatbot with better packaging. Same engine, nicer box. The real test is pretty simple: can it reliably move a task forward across systems or decisions, within clear limits, without creating more review work than it removes?

That question matters even more in healthcare, where complexity is high and mistakes have real consequences. If you haven’t already, it’s worth reading up on how physicians can spot bad AI tools before adopting them.

| AI Chatbot | AI Agent |
|---|---|
| Responds one prompt at a time | Can take multiple steps in sequence with less hand-holding |
| Mostly gives information, suggestions, or drafts | Can retrieve data, use tools, trigger actions, and move workflows forward |
| Usually needs the user to direct every next step | Can operate with more autonomy within defined guardrails |
| Best for brainstorming, writing, summarizing, explaining | Best for automating repetitive workflows, coordinating tasks, and executing processes |

2. The biggest opportunity is not replacing physicians. It is reducing workflow drag

When people hear “AI agent,” they usually swing to one extreme or the other. Some picture AI diagnosing patients, running clinics, and replacing physician judgment. Others roll their eyes and assume it’s just another shiny buzzword slapped onto old software.

The more realistic view sits somewhere in the middle. The strongest near-term use case for AI agents in medicine is not physician replacement. It’s workflow relief.

Healthcare is already getting a preview of that with ambient AI documentation. Physicians are adopting it because it solves a real problem. Not because it’s flashy. Not because it sounds futuristic. Because it helps with documentation and administrative drag… and that matters.

AMA reporting has also highlighted health systems using ambient tools at scale, with one example citing 2.5 million uses in one year and roughly 15,000 hours saved. That lesson is bigger than scribes.

The real value of healthcare AI often shows up when the technology reduces repetitive friction around the clinical encounter rather than trying to replace the encounter itself. An AI agent that helps prep charts, surface care gaps, summarize relevant history, draft patient education, organize follow-up, or handle simple admin steps may be far more useful than one making grand promises about autonomous medicine.

In a lot of cases, the real win is pretty boring. It’s just working smarter with AI instead of adding one more disconnected tool to an already messy system. (Healthcare has enough of those already.)

Simply put, the opportunity is not “AI doctor.” It’s less wasted physician energy.

That matters because physicians are expensive, highly trained decision-makers. Every hour spent on fragmented administrative work is an hour not spent on diagnosis, patient communication, leadership, strategy, procedural skill, or rest. And even modest efficiency gains can create real leverage when they happen over and over across the week.

For independent physicians, practice owners, and entrepreneurial doctors, this matters even more. Agent-style tools may eventually help lean teams operate with more precision. A smaller practice could manage communication, intake, documentation support, patient reminders, and internal coordination more effectively without having to expand headcount at the same pace. That becomes even more interesting when you stop thinking about a single AI app and start thinking about an AI-enabled team.

That does not mean staffing disappears. It means some workflows become more scalable.

For employed physicians, the value may look a little different. Better tools could mean less after-hours charting, less inbox overload, and less task sprawl. Maybe even a workday with less cognitive clutter… which, honestly, sounds pretty great. For some, that may even pair well with using AI and virtual assistants to take lower-value tasks off their plate.

Either way, the real question is not whether AI agents sound impressive. It’s whether they meaningfully reduce drag.

That is how physicians should evaluate them:

- Does this save time?

- Does it reduce task switching?

- Does it improve quality?

- Does it lower burnout pressure?

- Does it help the right human stay focused on the right level of work?

If the answer is no, then the tool may be interesting, but it is not leverage.

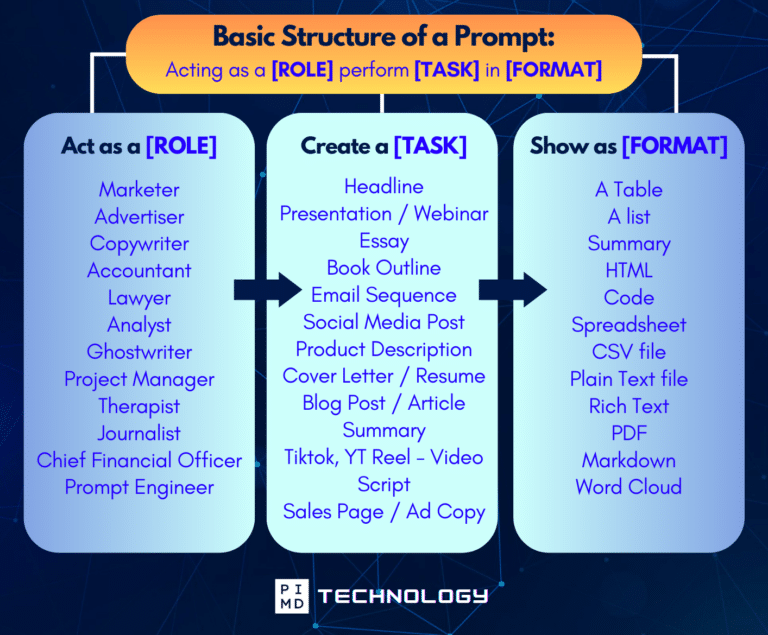

Unlock the Full Power of ChatGPT With This Copy-and-Paste Prompt Formula!

Download the Complete ChatGPT Cheat Sheet! Your go-to guide to writing better, faster prompts in seconds. Whether you’re crafting emails, social posts, or presentations, just follow the formula to get results instantly.

Save time. Get clarity. Create smarter.

3. Physicians should care, but they should care carefully

The case for paying attention to AI agents is strong. The case for trusting them blindly? Not so much.

Do not ask, “Is AI good or bad?” Ask, “Where is this safe, useful, and worth the tradeoff?”

Healthcare is a high-stakes environment. Even when AI sounds polished, physicians are still responsible for what they sign, what they approve, and what touches patient care. That is exactly why physicians also need to understand the compliance side of using AI without getting sued.

So how should physicians actually use it? Your mindset around AI agents should be practical, not ideological.

Some tasks are obviously better fits than others. Low-risk, repetitive, rules-based, reviewable work is the natural starting point. Think scheduling logic, information gathering, message drafting, documentation support, chart summaries, and process coordination. These are the kinds of tasks where speed and consistency matter, and where a human can stay in the loop without having to rebuild the whole thing from scratch.

Higher-risk clinical judgment is different. Nuanced decisions, patient-specific reasoning, and anything with meaningful downstream consequences should clear a much higher bar. In those settings, AI may be useful as support… but not as a substitute for judgment.

This is also where governance matters.

The real question is not just what an agent can do. It’s what it should be allowed to do, what data it can access, how its output gets reviewed, and what happens when it fails. That’s not boring compliance talk. That’s the whole ballgame in healthcare.
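One way to picture those governance questions is as an explicit policy that gets checked before an agent acts. The sketch below is a hypothetical illustration, not any vendor’s API: the `AgentPolicy` class, the action names, and the data categories are all assumptions made up for this example.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical guardrail config: what an agent may do and who signs off."""
    allowed_actions: frozenset[str]
    readable_data: frozenset[str]
    needs_human_review: frozenset[str] = field(default_factory=frozenset)

    def decide(self, action: str, data_needed: set[str]) -> str:
        """Return 'deny', 'review', or 'allow' for a requested action."""
        if action not in self.allowed_actions:
            return "deny"  # outside the agent's permitted scope
        if not data_needed <= self.readable_data:
            return "deny"  # would touch data it may not access
        if action in self.needs_human_review:
            return "review"  # queue for a human before anything goes out
        return "allow"


# Illustrative policy: the agent may draft and summarize, but drafts need sign-off.
policy = AgentPolicy(
    allowed_actions=frozenset({"draft_message", "summarize_chart"}),
    readable_data=frozenset({"visit_summary", "schedule"}),
    needs_human_review=frozenset({"draft_message"}),
)
```

The important property is the default: anything not explicitly allowed is denied, so an unexpected request fails closed rather than slipping through.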

So what should physicians watch for?

- First, be skeptical of vague claims. If a vendor says a tool is “agentic” but can’t clearly explain the workflow, oversight, failure points, or review process, that’s a red flag.

- Second, look for narrow wins before big promises. A tool that reliably fixes one annoying workflow is usually more valuable than one that claims it will reinvent the entire practice. (We’ve all seen that movie before.)

- Third, measure the supervision burden. If an agent saves five minutes but creates ten minutes of verification work, it’s not helping.

- Fourth, don’t confuse a polished interface with real capability. A slick demo is nice. Operational reliability is nicer.

- And fifth, remember that strategy matters more than novelty. The physicians who benefit most from these tools will probably be the ones who understand their workflow problems clearly before they go shopping for solutions.

Long term, that is part of becoming one of the AI-literate doctors who will win. That may be the most important mindset shift of all.

AI agents are not a reason to abandon professional judgment. They’re a reason to become more intentional about where your judgment is most valuable.
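The supervision-burden check from the list above is simple arithmetic, and it can help to write it down. This is a rough sketch under stated assumptions: the function name is hypothetical, and the figure of 40 tasks per week is an invented number for illustration, not data from any study.

```python
def net_minutes_saved(minutes_saved: float, minutes_verifying: float, tasks_per_week: int) -> float:
    """Weekly net time: what the tool saves minus what a human spends checking it."""
    return (minutes_saved - minutes_verifying) * tasks_per_week


# The example from the list: saves 5 minutes, costs 10 minutes of verification.
print(net_minutes_saved(5, 10, tasks_per_week=40))  # -200: a net loss

# A narrower tool that saves 5 minutes and needs 1 minute of review.
print(net_minutes_saved(5, 1, tasks_per_week=40))  # 160: a net gain
```

Time is only one of the criteria above, but a negative number here is usually a good reason to stop before looking at the rest.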

Final Thoughts

So, should physicians care about AI agents? Yes… but not because they’re trendy.

Physicians should care because AI is moving from conversation to execution. The next phase of useful healthcare AI will probably be less about asking smarter questions in a chat window and more about reducing friction in the messy, repetitive workflows around patient care. That’s where things start to get interesting.

And frankly, that’s where things start to get useful.

This does not mean medicine is about to be handed over to autonomous systems. Let’s not get carried away. It means the tools around medicine are becoming more capable, more operational, and potentially more helpful.

For physicians, the smartest response is neither fear nor blind enthusiasm. It’s discernment.

Understand what an agent is. Look for practical use cases. Focus on workflow leverage. Demand guardrails. Keep humans accountable. And learn to tell the difference between an impressive demo and something that actually creates value in the real world.

The physicians who do that well won’t just “use AI.” They’ll use it in a way that protects judgment, preserves trust, and creates more room for the work that matters most. And honestly, that’s the goal.

But what do you think? Let us hear your thoughts down in the comments!

Download The Physician’s Starter Guide to AI – a free, easy-to-digest resource that walks you through smart ways to integrate tools like ChatGPT into your professional and personal life. Whether you’re AI-curious or already experimenting, this guide will save you time, stress, and maybe even a little sanity.

Want more tips to sharpen your AI skills? Subscribe to our newsletter for exclusive insights and practical advice. You’ll also get access to our free AI resource page, packed with AI tools and tutorials to help you have more in life outside of medicine. Let’s make life easier, one prompt at a time. Make it happen!

Disclaimer: The information provided here is based on available public data and may not be entirely accurate or up-to-date. It’s recommended to contact the respective companies/individuals for detailed information on features, pricing, and availability. All screenshots are used under the principles of fair use for editorial, educational, or commentary purposes. All trademarks and copyrights belong to their respective owners.
